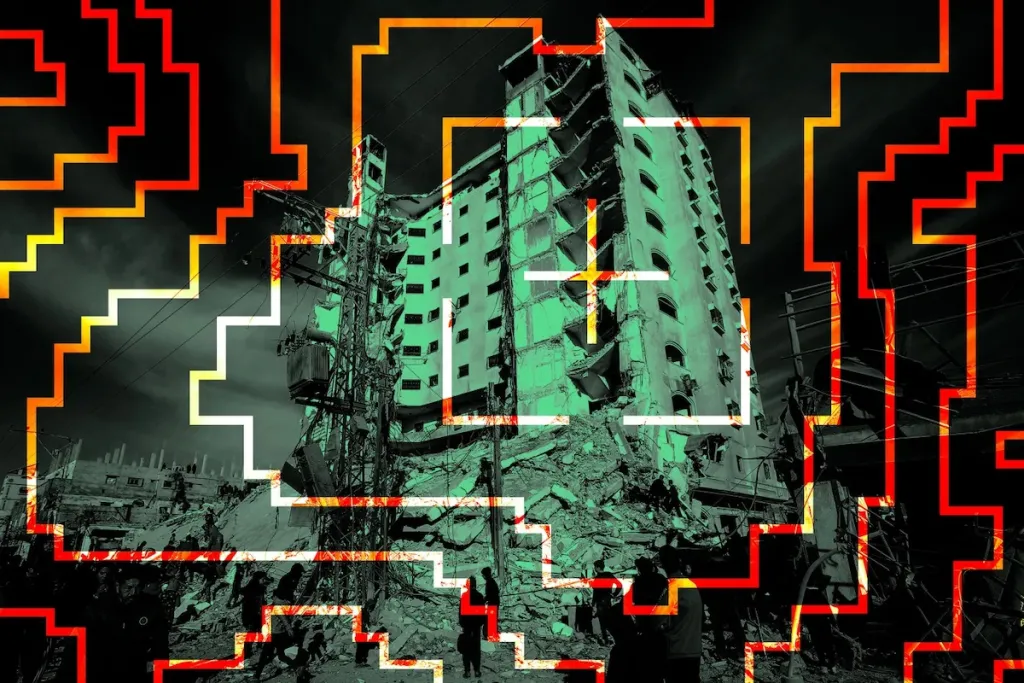

Israel uses Gaza-tested AI system against Iran: Experts sound the alarm

After Israel's unprecedented use of artificial intelligence (AI) to identify bombing targets in Gaza, experts are now expressing serious concerns about the lack of human oversight in Israel's AI-driven targeting operations in Iran.

Analysts following the situation are paying particular attention to the dangers that this type of autonomous system can create. In their view, AI that makes decisions without human intervention can lead to unexpected and tragic consequences.

Trita Parsi, Executive Vice President of the Quincy Institute for Responsible Statecraft, wrote on X that “similarities between Israel's operations in Gaza and potential targeting in Iran are becoming increasingly apparent.” The comparison reflects deep concern about the expanding role of artificial intelligence in military operations.

Experts are asking who bears ethical and legal responsibility for targeting decisions made by artificial intelligence. Without a human involved in the decision, it remains unclear who would be held accountable for errors or biased decisions made by autonomous systems.

The use of such technologies raises serious questions under international law and the rules of war. The future consequences of using artificial intelligence in warfare, and its compliance with humanitarian principles, need broad consideration.

Analysts particularly emphasize the importance of transparency and human oversight in the development and use of military artificial intelligence systems. They call on the international community to treat the issue with greater care and to establish appropriate regulatory mechanisms.